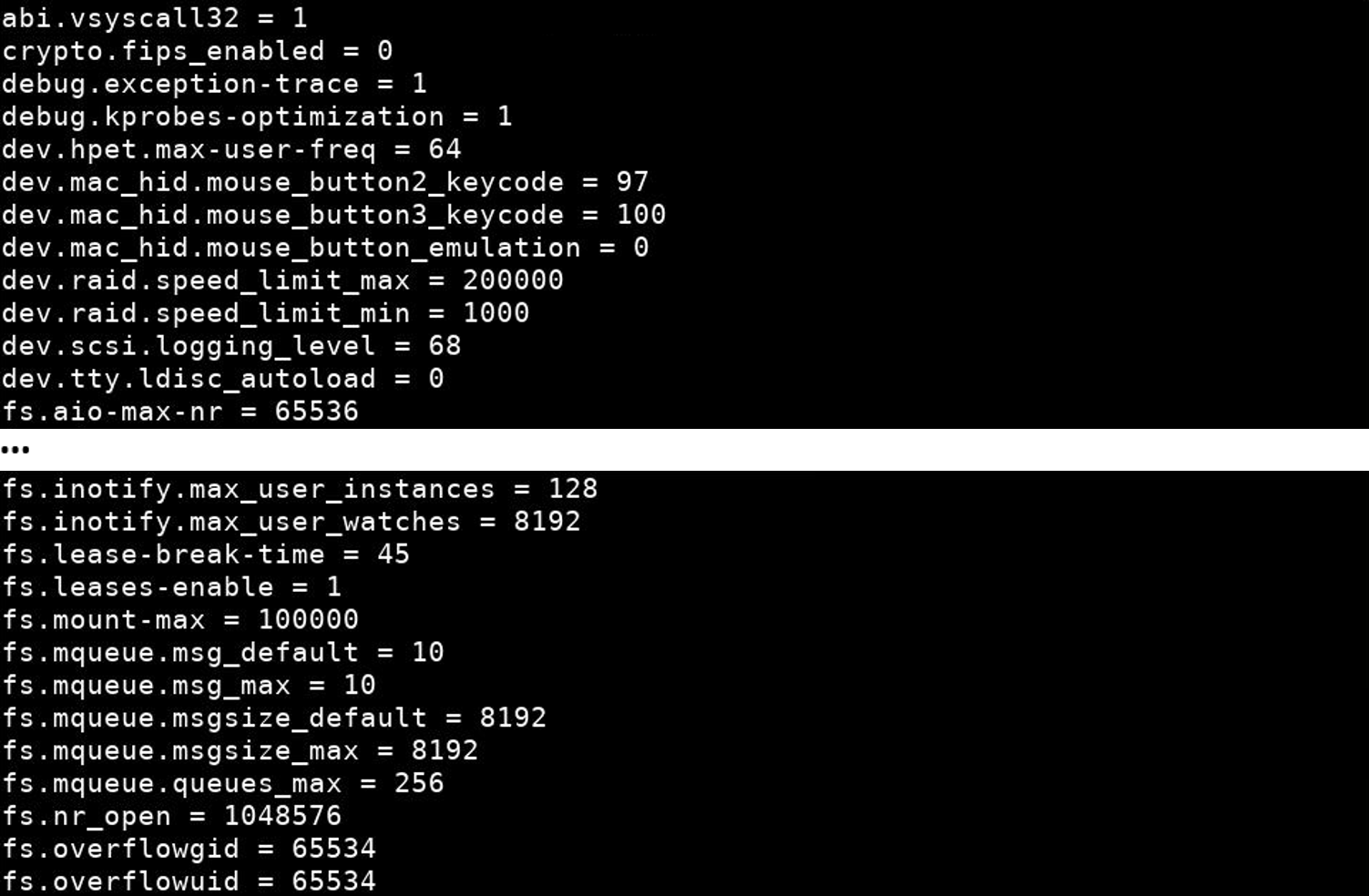

Today I delved into the underworld of Linux memory allocation, in particular into overcommitting memory (RAM). After a couple of X11 hangs I decided I needed to learn a little more about the various settings that come as stock with the Linux kernel, to try to tame them, or at least reduce or stop these annoying hangs followed by reboots!

Most applications ask for more memory than they actually need to start up. Some of this is down to bad software design, or they expect that you'll need that much at some point in the future... a sort of "this is my worst case scenario requirement of RAM, and I'll tell you that now before we start!"

The stock Linux kernel settings more or less just agree to the application's request without checking whether the hardware could support the total requested memory in that worst case scenario, partly because most applications never need what they ask for.

To see your memory system now, under 'default' settings, enter the following into terminal:

`sudo cat /proc/meminfo`

We can see lots of lines but the four we're interested in are:

MemTotal: The total amount of physical RAM available on your system.

MemFree: The total amount of physical RAM not being used for anything.

CommitLimit: The total amount of memory, both RAM and SWAP, available to commit to the running and requested applications (not necessarily directly related to the actual physical RAM amount, we will see why later).

Committed_AS: The total amount of memory required in the worst case scenario right now if all the applications actually used what they asked for at startup!

If the applications really did need what they originally asked for, an out-of-memory or 'OOM' condition would happen. This would mean that the OOM-killer would kick in to try and free up actual memory by killing running processes it thinks might help to free up memory. By then though a kernel-panic (or at best an X11 hang) might have happened, resulting in a frozen system (aka blue-screen in MS terms), or of course the OOM-killer might have killed a critical system process.

To solve the random selections of the OOM-killer potentially killing off a critical system process, or not kicking in prior to a kernel-panic, we can change the following:

vm.overcommit_ratio=100: The percentage of physical RAM that counts towards the commit limit. Any SWAP is added on top in full, so the limit is RAM + SWAP at 100, or just RAM if you have no SWAP. (E.g. with RAM=1gb and SWAP=1gb, overcommit_ratio=100 would mean 2gb could be allocated to applications. overcommit_ratio=50 would mean 1.5gb could be allocated, which would obviously not be a sensible choice here as half the RAM could never be committed!)

vm.overcommit_memory=2: This tells the kernel to never agree to allocate more than the limit determined by overcommit_ratio. Requests that would go over the limit are refused up front, so the OOM-killer should rarely, if ever, need to step in.

We can change the above settings by entering the following into terminal:

`sudo sync` - this tells any files in cache on RAM to write to disk now

`sudo sh -c "sync; echo 3 > /proc/sys/vm/drop_caches"` - this drops all caches from RAM

`sudo cat /proc/meminfo` - check that Committed_AS is below CommitLimit

`sudo sysctl -w vm.overcommit_ratio=99` - count 99% of physical memory (plus all SWAP) towards the limit

`sudo sysctl -w vm.overcommit_memory=2` - only allow applications to start if there is enough memory as determined by the above command

So now when we try to open a memory hungry application, or we have too many applications open already, the new application is refused with a notification such as 'file manager failed to fork', or it fails to start because there isn't the available memory. Without this limit the application could in theory start with what memory is available now, but it might go on requiring more memory to the point where the system becomes unusable and hangs or crashes.
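To make the CommitLimit arithmetic a bit more concrete, here is a rough Python sketch of how I understand the kernel to work the limit out when vm.overcommit_memory=2: all of the swap, plus overcommit_ratio percent of the physical RAM. The function names (`parse_meminfo`, `commit_limit_kb`, `headroom_kb`) are my own for illustration, and the sketch assumes no huge pages are reserved and that vm.overcommit_kbytes is left at 0, so treat it as an approximation rather than exactly what the kernel does.

```python
import re
from pathlib import Path


def parse_meminfo(text):
    """Return {field name: value} from /proc/meminfo-style text (values mostly in kB)."""
    fields = {}
    for line in text.splitlines():
        match = re.match(r"^(\w+):\s+(\d+)", line)
        if match:
            fields[match.group(1)] = int(match.group(2))
    return fields


def commit_limit_kb(mem_total_kb, swap_total_kb, overcommit_ratio):
    """Approximate CommitLimit: all swap plus overcommit_ratio percent of RAM.

    Assumption: no huge pages reserved and vm.overcommit_kbytes is 0.
    """
    return swap_total_kb + mem_total_kb * overcommit_ratio // 100


def headroom_kb(fields, overcommit_ratio):
    """How much more could be committed before the limit is reached (negative if over)."""
    limit = commit_limit_kb(fields["MemTotal"], fields.get("SwapTotal", 0), overcommit_ratio)
    return limit - fields["Committed_AS"]


if __name__ == "__main__":
    # 1 GiB RAM + 1 GiB swap at ratio 50: all the swap plus half the RAM
    print(commit_limit_kb(1048576, 1048576, 50))  # 1572864

    meminfo = Path("/proc/meminfo")
    ratio_file = Path("/proc/sys/vm/overcommit_ratio")
    if meminfo.exists() and ratio_file.exists():
        fields = parse_meminfo(meminfo.read_text())
        ratio = int(ratio_file.read_text().strip())
        print("headroom at current ratio (kB):", headroom_kb(fields, ratio))
```

Running this before switching to vm.overcommit_memory=2 gives a quick idea of whether Committed_AS is already above the limit you are about to enforce; if the headroom is negative, new applications are likely to be refused straight away.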
